Last Updated: May 7, 2026

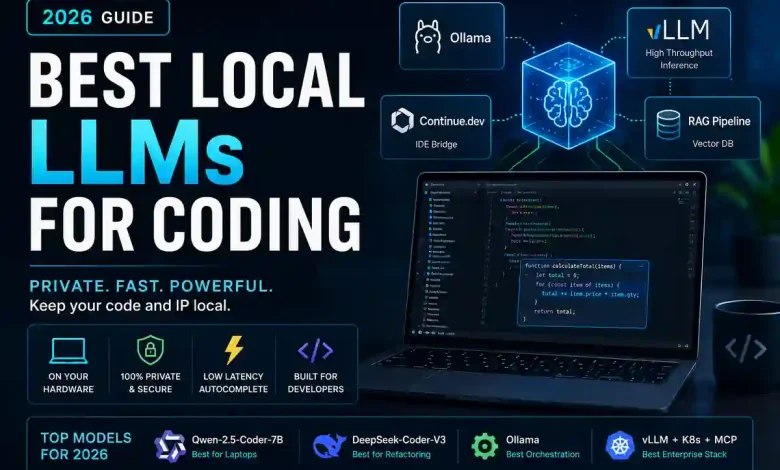

For years, AI-powered coding was synonymous with the cloud. Developers sent their proprietary codebases to remote servers to receive suggestions, raising significant concerns about data privacy and intellectual property, on top of the familiar problem of “hallucinated” output. In 2026, that is changing: teams are shifting decisively toward local LLM infrastructure.

By running large language models (LLMs) on local hardware, engineering teams can now build “zero-egress” environments in which code never leaves the machine, while still keeping autocomplete responses under roughly 200 ms, fast enough to preserve a “flow state” development experience. This guide breaks down the hardware, software, and operational metrics needed to deploy a professional-grade local AI stack.

Local Privacy & Infrastructure Performance

In 2026, local LLMs have become a widely adopted part of the engineering toolchain. Based on internal evaluations and deployment testing with Ollama and vLLM, modern local models can handle complex software engineering tasks while keeping proprietary logic entirely inside the organization. For broader context, see our analysis of the best AI coding assistants in 2026.

Testing Methodology:

- Repository: 2.8M LOC TypeScript monorepo

- Hardware: RTX 5090 (32GB) + Mac Studio M4 Ultra

- Quantization: Tested at Q5_K_M and Q6_K precision

- Inference Stack: Ollama 0.x + vLLM + Continue.dev

The Local AI Stack Architecture

A modern local deployment uses a layered stack to connect model weights to a private repository. Because every layer runs on the developer's machine or the local network, code never leaves that boundary, which is the stack's core security guarantee.

- IDE Layer: VS Code or Cursor serves as the frontend.

- Bridge Layer: Continue.dev or Roo Code handles prompt construction and context retrieval.

- Inference Engine: Ollama (local) or vLLM (server-side) executes the model.

- Hardware Layer: GPU VRAM or Apple Unified Memory stores the active model weights.
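To make the zero-egress boundary concrete, here is a minimal Python sketch of what a bridge layer might do before sending a prompt to the inference engine: refuse any endpoint that is not a loopback or private-network address, then build a request for Ollama's `/api/generate` endpoint on its default port 11434. The names `is_local_endpoint` and `build_completion_request` are hypothetical helpers for illustration, not part of Continue.dev, Roo Code, or Ollama, and the model tag is only an example.

```python
import ipaddress
import json
import urllib.request
from urllib.parse import urlparse

# Assumption: Ollama's default local endpoint. A vLLM server on the LAN would use its own URL.
OLLAMA_URL = "http://localhost:11434/api/generate"


def is_local_endpoint(url: str) -> bool:
    """Hypothetical guard: accept only 'localhost' or loopback/private IP literals."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Other hostnames are rejected rather than resolved, so a DNS change
        # can never silently redirect traffic off the local network.
        return False
    return addr.is_loopback or addr.is_private


def build_completion_request(prompt: str, model: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Hypothetical helper: build (but do not send) a non-streaming generate request."""
    if not is_local_endpoint(url):
        raise ValueError(f"refusing non-local inference endpoint: {url}")
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Example usage (builds the request only; sending requires a running Ollama server):
req = build_completion_request("def add(a, b):", model="qwen2.5-coder:7b")
```

Sending it is one more call, `urllib.request.urlopen(req)`; with `stream` set to false, Ollama returns a single JSON object whose `response` field holds the completion. Rejecting bare hostnames instead of resolving them is a deliberately conservative design choice.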

Why Smaller Models Win Daily Usage

The Developer Tolerance Threshold: In real engineering environments, teams frequently prefer fast 7B models over more capable 33B models. Our internal evaluations suggest that during rapid editing sessions, developers prioritize responsiveness, specifically autocomplete latency under roughly 200 ms, over raw reasoning quality. Trust is built on consistent response timing, not just theoretical accuracy.
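One way to turn the ~200 ms threshold into a measurable gate is to record time-to-first-token (TTFT) samples for autocomplete requests and check a high percentile rather than the mean, since a few slow outliers erode trust even when the average looks fine. The sketch below assumes you already collect per-request TTFT values in milliseconds; the function names and the choice of p95 are illustrative assumptions, not a standard.

```python
def nearest_rank_percentile(samples_ms, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples <= it.

    pct is an integer in 1..100.
    """
    if not samples_ms:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = -(-pct * len(ordered) // 100)  # ceil(pct * n / 100) using integer math
    return ordered[rank - 1]


def latency_report(ttft_ms, budget_ms=200.0):
    """Summarize autocomplete TTFT against a latency budget (assumed 200 ms)."""
    p50 = nearest_rank_percentile(ttft_ms, 50)
    p95 = nearest_rank_percentile(ttft_ms, 95)
    return {
        "p50_ms": p50,
        "p95_ms": p95,
        "spread_ms": p95 - p50,  # rough proxy for timing consistency
        "within_budget": p95 <= budget_ms,
    }
```

Gating on p95 means a model passes only if nearly every keystroke-triggered completion arrives within budget, which matches the point that consistency, not average speed, is what developers notice.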

Quantization vs. VRAM Requirements

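Quantization stores model weights at reduced numerical precision (for example, about 5 bits per weight instead of 16) so that a model fits into available VRAM. For professional coding, Q5_K_M is usually the sweet spot; the largest models below drop to Q4_K_M to fit in memory.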
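The idea behind quantization can be shown with a deliberately simplified sketch: split the weights into blocks, store one floating-point scale per block, and round each weight to a small signed integer. This is plain symmetric absmax quantization, assumed here for illustration; llama.cpp's K-quant formats such as Q5_K_M are more elaborate (for example, they quantize the per-block scales themselves), so treat the code as a conceptual model only.

```python
# Simplified symmetric absmax scheme, NOT llama.cpp's actual K-quant format.

def quantize_block(weights, bits=5):
    """Map one block of floats to signed `bits`-bit integers plus a shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 15 for 5-bit signed values
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round to the nearest level and clamp to the representable range [-qmax-1, qmax].
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale, q):
    """Reconstruct approximate weights; per-element error is at most scale / 2."""
    return [v * scale for v in q]


def bits_per_weight(bits, block_size, scale_bits=16):
    """Integer payload plus the per-block scale amortized across the block."""
    return bits + scale_bits / block_size
```

With 5-bit values and a 16-bit scale shared by 32 weights, storage works out to 5.5 bits per weight, which illustrates why quantized sizes land slightly above the nominal bit width.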

| Model Size | Precision (Quant) | VRAM Required | Performance Impact |
|---|---|---|---|
| 7B (Qwen) | Q5_K_M | ~5.5 GB | Sub-200 ms time to first token (TTFT) |
| 14B (Qwen) | Q5_K_M | ~10.2 GB | High accuracy, moderate speed |
| 33B (DeepSeek) | Q4_K_M | ~19.5 GB | Excellent reasoning, requires high-end GPU |
| 70B+ (Llama) | Q4_K_M | ~40 GB+ | Best for refactoring, too slow for autocomplete |
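A rough way to sanity-check entries like these: weight memory is approximately parameter count × bits per weight ÷ 8, and the KV cache adds 2 × layers × KV heads × head dimension × context length × bytes per element on top. The sketch below encodes both; the bits-per-weight figures (roughly 5.7 for Q5_K_M and 4.8 for Q4_K_M), the model shape, and the fixed runtime overhead are assumptions you should replace with values from your actual model files and runtime.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate weight memory in decimal GB: params * bits / 8 bytes."""
    return params_billion * bits_per_weight / 8


def kv_cache_gb(n_layers, n_kv_heads, head_dim, n_ctx, bytes_per_elem=2):
    """KV cache size in decimal GB; the factor 2 covers one K and one V tensor per layer."""
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem / 1e9


def fits(vram_gb, params_billion, bits_per_weight, kv_gb, overhead_gb=1.0):
    """Hypothetical check: weights + KV cache + assumed runtime overhead within VRAM."""
    return weights_gb(params_billion, bits_per_weight) + kv_gb + overhead_gb <= vram_gb


# Example with an assumed 7B-class shape (28 layers, 4 KV heads, head_dim 128)
# at an 8K context and FP16 cache entries:
kv = kv_cache_gb(n_layers=28, n_kv_heads=4, head_dim=128, n_ctx=8192)
```

For instance, `fits(32, 70, 4.8, kv_gb=1.0)` returns `False`: about 42 GB of weights alone exceeds a 32 GB card, consistent with the 70B row above.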

Why Repository Retrieval Fails

Retrieval-augmented generation (RAG) is critical for giving the model repository context, but it often fails for three operational reasons:

- Stale Embeddings: Failing to re-index after significant refactors leads the model to hallucinate references to deleted code.

- Dependency Blindness: Standard chunking often misses the relationship between interfaces and far-flung implementations.

- Retrieval Noise: Large monorepos can surface duplicate utility functions, leaving the model unsure which implementation to follow.
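Two of these failure modes have cheap mitigations that fit in a few lines. Recording a content hash for each file at indexing time shows which files changed, were added, or were deleted since the last embedding pass, and hashing whitespace-normalized chunks lets you drop duplicate hits before they reach the prompt. This is a minimal sketch under those assumptions; `stale_paths` and `dedupe_hits` are hypothetical helpers, not part of Continue.dev or any particular retrieval library, and dependency blindness needs structural analysis (e.g., following imports) that hashing cannot provide.

```python
import hashlib
import re


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def stale_paths(indexed: dict[str, str], current: dict[str, str]) -> set[str]:
    """Paths whose embeddings must be added, rebuilt, or dropped.

    indexed: path -> content hash recorded when the file was last embedded
    current: path -> current file text
    """
    # New files have no recorded hash, so they also count as changed.
    changed = {p for p, text in current.items() if indexed.get(p) != content_hash(text)}
    deleted = set(indexed) - set(current)
    return changed | deleted


def dedupe_hits(hits: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Keep the first (highest-ranked) hit for each whitespace-normalized chunk."""
    seen: set[str] = set()
    unique = []
    for path, chunk in hits:
        key = content_hash(re.sub(r"\s+", " ", chunk).strip())
        if key not in seen:
            seen.add(key)
            unique.append((path, chunk))
    return unique
```

Running `stale_paths` in a pre-commit hook or CI job keeps re-indexing incremental, so the index stays fresh after refactors without re-embedding the whole monorepo.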

Hardware Reality: Mac Studio vs. RTX Workstation

Apple Mac Studio (M4 Ultra)

- Pros: Unified memory (up to 192GB) allows running massive 70B+ models.

- Cons: Lower peak tokens per second than dedicated GPUs.

NVIDIA RTX 5090 Workstation

- Pros: Highest peak performance and lowest latency for autocomplete.

- Cons: Limited to 32GB of VRAM, so 70B-class models at Q4_K_M (~40 GB+) do not fit without offloading; high heat and noise output.

What This Article Does NOT Measure

To keep the scope precise, this evaluation explicitly excludes:

- Multimodal reasoning (image-to-code).

- Internet-connected tool use or web search.

- Proprietary frontier model capabilities (e.g., GPT-5 class).

- Agentic workflows spanning multiple non-coding applications.

Reference Tooling

- Ollama: Local model orchestration

- vLLM: High-throughput inference

- Continue.dev: Leading open-source IDE bridge